Oct 10, 2019

Pro Tip: Tech Companies Should Stop Ogling Customers' Home Videos

Shira Ovide, Bloomberg News

(Bloomberg Opinion) -- The watchwords these days for internet companies are transparency and control. Facebook Inc., Twitter Inc., Google and other companies want to make it clearer, in theory, what information they’re vacuuming up. And in theory, they’re letting people limit what data is collected or how the companies harness that information to target ads or improve their computerized systems.

This is all great, in theory. In practice, of course, transparency and control can be less than they seem. And even in principle, they sometimes miss the point. What if, in addition to transparency and control, internet companies deployed this sophisticated technology called “common sense” when building and spreading their products?

For example, when Facebook got caught using phone numbers that people entered for account security to target ads, the company (belatedly) said it should have told users what it was doing.

“We’ll either have more disclosures and be very transparent about it, or we will no longer utilize it for ads,” Facebook executive Carolyn Everson told an interviewer last year. Twitter on Tuesday also disclosed it may have targeted ads based on the information people entered to keep their accounts secure. Twitter said this was an error, although the company didn’t say how long this practice had gone on.

For Facebook, the choice Everson sketched out was moot in the end. As part of a recent Federal Trade Commission settlement of an investigation into user privacy violations, Facebook committed to not using account-safeguard information for advertising.

The broader point lives on. Was the problem that Facebook (and Twitter) didn’t disclose this activity? Yes. But another problem is that the companies were using personal information in ways that people would not, and could not reasonably, expect.

Most people wouldn’t think that a phone number they entered into their account for security purposes might be used for advertising. That’s a good signal that companies shouldn’t do it in the first place.

Ditto for the recent reporting about Facebook and other companies having humans transcribe text from users’ audio snippets. Yes, it was wrong that Facebook didn’t tell people who turned on an audio transcription feature in Messenger that humans might be listening to portions of their chats.

Dave Limp, the Amazon.com Inc. executive overseeing Alexa-powered devices, told tech news publication GeekWire on Wednesday that he wishes his company had been more transparent about the human reviewers of Alexa audio recordings. Hours later, Bloomberg News reported that Amazon workers review select video clips captured by the company’s Cloud Cam home security cameras.

Amazon does not explicitly tell people who own a Cloud Cam that humans are reviewing their video snippets to improve the device’s motion-detection software. Amazon said the video clips are provided voluntarily, but two sources told Bloomberg that the video review teams have picked up private activity of homeowners, including rare instances of people having sex.

Limp said at the GeekWire conference that Amazon had considered allowing human review of audio clips from Alexa-powered devices only if the device owners explicitly agreed to it. Ultimately, he said, Amazon decided that human review is essential to improving the Alexa technology, and that the company wants to use people’s data to improve technology for its customers. Amazon, in short, has unilaterally decided that what’s best for people is to be poorly informed guinea pigs to improve an Amazon technology.

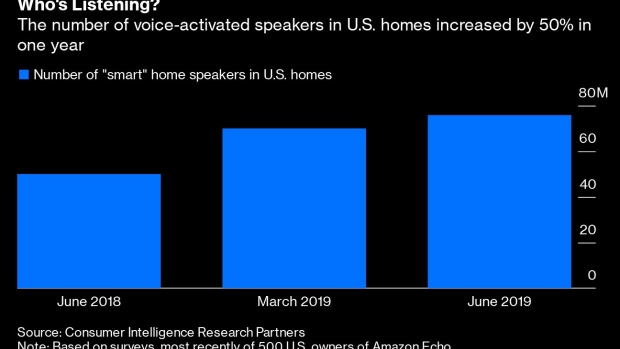

And regardless of what Facebook, Amazon, Twitter or other companies do or don’t disclose in the fine print, do people using audio transcription, motion-detection video cameras and voice-activated assistants like Alexa and Apple Inc.’s Siri expect humans to listen to snippets of their conversations or watch clips of video filmed inside their homes? Of course not.

People might intuitively understand their voice recordings are stowed in a company database somewhere, but common sense should tell those companies not to do things people would not anticipate.

Companies shouldn’t treat disclosure or control measures as a cure-all for aggressive use of people’s personal information. Yes, by all means, companies shouldn’t use data without giving people an informed choice, but they also shouldn’t do things people wouldn’t expect in the first place. If Siri and Alexa stay a little dumb because their makers can’t harness people’s voice clips and home video to train them, so be it.

A version of this column originally appeared in Bloomberg’s Fully Charged technology newsletter.

To contact the author of this story: Shira Ovide at sovide@bloomberg.net

To contact the editor responsible for this story: Daniel Niemi at dniemi1@bloomberg.net

This column does not necessarily reflect the opinion of the editorial board or Bloomberg LP and its owners.

Shira Ovide is a Bloomberg Opinion columnist covering technology. She previously was a reporter for the Wall Street Journal.

©2019 Bloomberg L.P.