Aug 11, 2022

UK’s Data Regulator Yet to Enforce Single Child Protection Case

Bloomberg News

(Bloomberg) -- The UK’s data protection watchdog has yet to take action against any company for breaching rules designed to protect children from predatory data harvesting practices, two years after they were introduced.

The failure to make an example of companies violating the rules is indicative of the regulator’s shift toward being more business friendly at the cost of upholding children’s privacy rights, say digital rights groups.

The Children’s Code came into force in September 2020 with a 12-month transition period, during which companies had time to adapt their services to comply with the rules. The UK policy, which applies to children under 18 years old, was supposed to force tech firms to build in protections by default, and explain privacy settings in a way a child would understand.

Although not a piece of legislation on its own, it provides guidance for interpreting how the Data Protection Act -- the UK’s implementation of Europe’s General Data Protection Regulation -- should be applied to digital services aimed at children. Breaking such rules risks penalties of up to 4% of annual global revenue.

Just before the grace period ended in September 2021, children’s rights group 5Rights submitted 250 examples of noncompliance by 55 companies to the Information Commissioner’s Office (ICO), highlighting “systemic breaches” of the Age Appropriate Design Code, the formal name of the Children’s Code. Since then, the ICO has contacted many of the companies highlighted but hasn’t publicly disclosed which ones, digital rights groups said.

The UK’s Information Commissioner, John Edwards, said that many companies have voluntarily made changes to their services to comply with the code, resulting in children being “better protected online in 2022 than they were in 2021.”

“There’s more for us to achieve,” he said. “We are currently looking into how over 50 different online services are complying with the code. We’ve got ongoing investigations. And we’ll use our enforcement powers where they are required.”

Hefty Fines

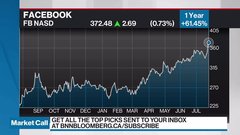

In the European Union, Meta’s Instagram could face a hefty fine from its main EU data protection watchdog later this month over the way the service treats children’s data.

The Irish Data Protection Commission has two probes open into Instagram. A fine could soon be issued in one of the probes after the bloc’s privacy watchdogs last month resolved a dispute over the Irish authority’s draft decision in the case. TikTok is also facing scrutiny from European watchdogs.

While some of the larger platforms including Instagram, TikTok and YouTube made changes to how they handle children’s data and account settings in the UK, including defaulting teen accounts to private, groups focused on children’s digital rights have seen no evidence of enforcement against companies that don’t comply.

“The lack of dissuasive or public enforcement of data protection law by the ICO means the law is a paper tiger with no teeth,” said Jen Persson, director of Defend Digital Me, a UK-based civil liberties group that focuses on children’s rights to privacy and free expression.

The wording of GDPR states that fines and penalties should be dissuasive, but the lack of transparency over ICO investigations and their outcomes contributes to the sense among advocacy groups that the regulator has yet to follow through on enforcing its recommendations.

“We are completely in the dark although we initiated the process,” said Duncan McCann from 5Rights, referring to the research his organization submitted to the ICO. “They are keeping their cards super close to their chest with all their investigations.”

©2022 Bloomberg L.P.